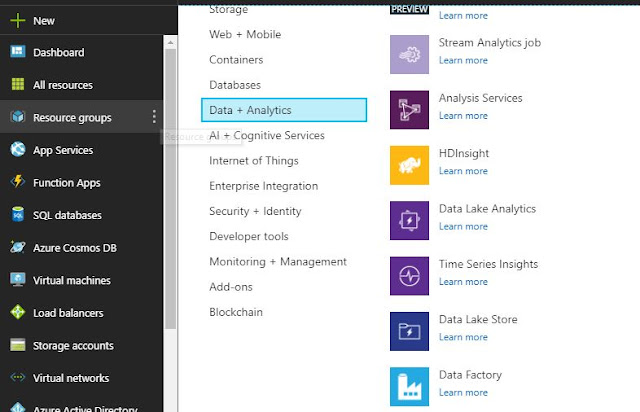

Azure Data Factory is a cloud data integration service with which we can:

1. Create data-driven ETL/ELT workflows to process raw, unorganised data from various data stores for data analysis

2. Transform the data by using compute services such as Azure HDInsight Hadoop, Spark, Azure Data Lake Analytics, and Azure Machine Learning

3. Automate data movement

An Azure subscription can have one or more Azure Data Factory instances (or data factories). Azure Data Factory is composed of four key components.

1. Pipeline

A data factory can have one or more pipelines. A pipeline is a logical grouping of activities that together perform a unit of work.

2. Activity

An activity represents a processing step in a pipeline. Azure Data Factory supports three types of activities: data movement activities, data transformation activities, and control activities.

3. Datasets

Datasets represent data structures within the data stores that are used in activities as inputs or outputs.

4. Linked services

Linked services are much like connection strings. A linked service can represent a data store, in the case of a data movement activity, or a compute resource, in the case of data transformation or analysis.
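
To see how the four components refer to one another, here is a minimal sketch in Python that builds them as plain dictionaries and prints the pipeline as JSON. The layout is modelled on the JSON definitions Data Factory uses for its resources, but treat the details as assumptions: all names (`ExampleStorageLinkedService`, `RawDataset`, `CuratedDataset`, `CopyRawToCurated`, `ExamplePipeline`), the folder paths, and the connection string placeholder are hypothetical, and the exact `type` values depend on the connector and service version you use. Nothing here is deployed to Azure.

```python
import json

# Linked service: plays the role of a connection string to a data store.
# The name and the connection string placeholder are hypothetical.
linked_service = {
    "name": "ExampleStorageLinkedService",
    "properties": {
        "type": "AzureBlobStorage",
        "typeProperties": {"connectionString": "<storage-connection-string>"},
    },
}


def make_dataset(name, folder_path):
    """Build a dataset that points at a folder in the linked data store."""
    return {
        "name": name,
        "properties": {
            "type": "AzureBlob",
            # A dataset refers to its linked service by name.
            "linkedServiceName": {
                "referenceName": linked_service["name"],
                "type": "LinkedServiceReference",
            },
            "typeProperties": {"folderPath": folder_path},
        },
    }


def dataset_reference(dataset):
    """Build the reference an activity uses to name a dataset."""
    return {"referenceName": dataset["name"], "type": "DatasetReference"}


# Datasets: the data structures used as activity inputs and outputs.
input_dataset = make_dataset("RawDataset", "raw/input")
output_dataset = make_dataset("CuratedDataset", "curated/output")

# Activity: one processing step, here a data movement (copy) activity.
copy_activity = {
    "name": "CopyRawToCurated",
    "type": "Copy",
    "inputs": [dataset_reference(input_dataset)],
    "outputs": [dataset_reference(output_dataset)],
    "typeProperties": {
        "source": {"type": "BlobSource"},
        "sink": {"type": "BlobSink"},
    },
}

# Pipeline: a logical grouping of activities that performs a unit of work.
pipeline = {
    "name": "ExamplePipeline",
    "properties": {"activities": [copy_activity]},
}

print(json.dumps(pipeline, indent=2))
```

The chain of references runs pipeline → activity → dataset → linked service, so the same linked service can back several datasets, and the same dataset can be used by several activities.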
